Introduction

With the ever-growing adoption of cloud services, effective cloud management has become more crucial than ever. It’s not just about maximizing efficiency and minimizing costs - it's also about safeguarding your organization from potential financial damage due to security breaches.

In this post, we'll delve into a blend of robust cost optimization strategies and critical security measures.

These cloud management best practices could potentially save your organization millions of dollars while bolstering the overall integrity of your operations.

So, whether you're looking to tighten your budget, or fortify your defense against security threats, these insights are designed to deliver both cost savings and peace of mind. Let's dive in!

What is Cloud Management?

Cloud management refers to the process of overseeing and optimizing resources, operations, and services within a cloud computing environment. As businesses increasingly adopt cloud-based solutions, effective cloud management has become an essential skill.

Important aspects of cloud management include:

- Resource allocation and optimization: Ensuring resources are available and efficiently utilized to minimize costs.

- Security and compliance: Implementing robust security measures to protect sensitive information and comply with relevant regulations.

- Performance monitoring: Maintaining optimal user experiences and addressing any issues promptly.

- Cost management: Monitoring and controlling cloud expenditure to identify cost-saving opportunities.

- Automation and orchestration: Streamlining routine tasks to increase operational efficiency.

- Disaster recovery and backup: Regularly backing up data and implementing recovery strategies for potential service disruptions.

- Governance and policy management: Establishing clear policies and guidelines for cloud usage.

As cloud adoption continues to grow, cloud management has become vital to maintaining a secure, efficient, and cost-effective infrastructure.

What are the Benefits of Cloud Management?

The benefits of cloud management extend far beyond cost savings and mitigating financial risk.

While cost optimization remains a compelling advantage, businesses also gain enhanced resource optimization, scalability, and flexibility.

When done well, cloud management can effectively allocate and utilize resources, streamline operations, and respond swiftly to changing business needs. Additionally, the financial visibility and control provided by cloud management empower businesses to make informed decisions, accurately allocate costs, and plan budgets effectively.

Cloud management brings transparency and accountability, enabling businesses to optimize resource utilization, improve performance, and drive innovation. It acts as a catalyst, unlocking the true power of the cloud and propelling businesses toward success in the digital age.

3 Essential Cloud Management Best Practices

Let’s take a look at 3 high-level best practices that could, if not followed, cost your organization millions of dollars.

1. Don't Take Cloud Security For Granted

The average cost of a data breach in 2020 was $3,860,000 USD according to a recent report from IBM.

Cryptojacking is another common attack: an attacker obtains credentials to your cloud and spins up resources to mine cryptocurrency, potentially running up millions of dollars in compute charges.

Let’s go over a few basic security controls that your organization should have in place to prevent unauthorized access.

Restrict root access to 1-2 people

Root accounts are the equivalent of a super admin in your cloud, so the username and password for this account should be locked up safely. A disgruntled employee or hacker could use these credentials to wreak havoc in your cloud.

One option is to give the root password to one team member and register the multi-factor authentication (MFA) device to a second employee who cannot see the password. This effectively enforces a two-person rule for each root login (think nuclear submarine). CSPs have methods of recovering the account if the password or MFA holder moves on.

Require MFA for every employee (especially root)

Some organizations may avoid this due to the inconvenience of having to check a code on your phone every time you log in.

MFA is still one of the most effective ways of preventing unauthorized access. For a more convenient option, we’d recommend looking into physical MFA devices such as YubiKey.
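As a rough illustration of enforcing MFA rather than just recommending it, here is a minimal sketch of an IAM policy, built as a Python dict, that denies almost everything when a request was not made with MFA. The function name and statement ID are our own; the `aws:MultiFactorAuthPresent` condition key and the listed actions are standard AWS names. Treat the allow-list as an assumption to adapt, and note that IAM policies like this apply to IAM users, not to the root user.

```python
import json

# Actions a user still needs before MFA is set up, so they can enroll a device.
# This list is an assumption based on the commonly used AWS pattern; adjust it.
MFA_SETUP_ACTIONS = [
    "iam:CreateVirtualMFADevice",
    "iam:EnableMFADevice",
    "iam:GetUser",
    "iam:ListMFADevices",
    "iam:ListVirtualMFADevices",
    "iam:ResyncMFADevice",
    "sts:GetSessionToken",
]


def build_require_mfa_policy(allowed_without_mfa=MFA_SETUP_ACTIONS):
    """Return a policy document that denies every action except the MFA
    setup actions whenever the request was not authenticated with MFA."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyAllExceptMfaSetupWithoutMfa",  # hypothetical name
                "Effect": "Deny",
                "NotAction": list(allowed_without_mfa),
                "Resource": "*",
                "Condition": {
                    # BoolIfExists also matches requests where the key is absent.
                    "BoolIfExists": {"aws:MultiFactorAuthPresent": "false"}
                },
            }
        ],
    }


print(json.dumps(build_require_mfa_policy(), indent=2))
```

Attaching a policy like this to a group that contains every human user is one way to make MFA non-optional without touching each user individually.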

Enable and use API & activity logging

In AWS, this is what CloudTrail provides. The audit trail that CloudTrail provides will be vital during incident investigations. At a minimum, each account should have its own S3 bucket containing all of the CloudTrail logs (with a storage lifecycle applied). Access to the bucket and trail should be restricted to security personnel to prevent tampering or accidental deletion.

For environments with higher security requirements, consider centralizing CloudTrail logs in a separate “logging” account. This account could also include a security information and event management (SIEM) tool. Always establish a data retention/archival policy for logs in the SIEM as costs can quickly skyrocket if the data is left unchecked.
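To make the "storage lifecycle" idea concrete, here is a small sketch that builds an S3 lifecycle configuration for a CloudTrail log bucket. The dict follows the shape S3 expects for a lifecycle configuration; the rule ID, the 90-day archive point, and the 365-day expiry are placeholder assumptions, so substitute whatever your retention policy actually requires.

```python
def build_log_lifecycle(prefix="AWSLogs/", archive_after_days=90, expire_after_days=365):
    """Build a lifecycle configuration that archives logs to Glacier and
    later deletes them. The default day counts are illustrative only."""
    if not 0 < archive_after_days < expire_after_days:
        raise ValueError("archive_after_days must be positive and less than expire_after_days")
    return {
        "Rules": [
            {
                "ID": "cloudtrail-log-retention",  # hypothetical rule name
                # CloudTrail writes under AWSLogs/<account-id>/ by default.
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": archive_after_days, "StorageClass": "GLACIER"}
                ],
                "Expiration": {"Days": expire_after_days},
            }
        ]
    }


config = build_log_lifecycle()
print(config["Rules"][0]["Transitions"], config["Rules"][0]["Expiration"])
```

With boto3, a configuration like this would typically be passed as the `LifecycleConfiguration` argument of `put_bucket_lifecycle_configuration`; check your compliance requirements before enabling any expiry on audit logs.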

Use leased roles and integrate authentication with your identity provider (IdP)

A key benefit of IdP integration is that you won’t have to worry about employees retaining access to your cloud after they’ve left the company. Take advantage of your SSO/LDAP/AD to simplify access provisioning. Better yet, utilize leased roles for all day-to-day activities. Instead of logging in as an IAM user, which presumably keeps the same access key for X days, you are granted temporary credentials that expire after, say, 2 hours.

The method of doing this will vary depending on your CSP and identity provider/SSO. Okta is one of the simplest to set up, and there are also workarounds to integrate with other providers like Google Workspace. In most cases, a quick search will identify the steps you need to take.

Here is more info on the AWS SSO Okta Integration, and the AWS SSO Gsuite Integration.
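For a feel of what a leased role looks like in practice, here is a minimal sketch. It builds the parameters for an AWS STS AssumeRole request with a 2-hour lease and checks whether a set of temporary credentials is close to expiring. The helper names, the example role ARN, and the 5-minute refresh margin are assumptions for illustration; `RoleArn`, `RoleSessionName`, and `DurationSeconds` are the real AssumeRole parameter names.

```python
from datetime import datetime, timedelta, timezone

LEASE_SECONDS = 2 * 60 * 60  # the 2-hour lease used as an example above


def assume_role_request(role_arn, user_name, lease_seconds=LEASE_SECONDS):
    """Build keyword arguments shaped like an STS AssumeRole request.
    Note: the role's maximum session duration must allow this lease
    (the default maximum is 1 hour, so it would need to be raised)."""
    return {
        "RoleArn": role_arn,
        "RoleSessionName": user_name,  # shows up in CloudTrail for auditing
        "DurationSeconds": lease_seconds,
    }


def needs_refresh(expiration, now=None, margin=timedelta(minutes=5)):
    """Return True when the credentials expire within the safety margin."""
    now = now or datetime.now(timezone.utc)
    return expiration - now <= margin


request = assume_role_request(
    "arn:aws:iam::123456789012:role/ExampleEngineer",  # hypothetical role
    "jane.doe",
)
print(request)
```

With boto3 you would typically pass these arguments to `sts_client.assume_role(**request)` and read the expiry time from the `Credentials` section of the response.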

Follow least-privilege principles for users and networks

Overprivileged users with good intent can still cause damage to your production networks if left to their own devices. You can expect non-networking professionals to assign public IPs, create lax security groups, and spin up resources with little consideration for cost or security. Limit IAM privileges to admins to prevent users from bypassing authentication controls.

Least-privilege should also apply to your networks. Restrict ingress always, and restrict egress too! Over-utilize your stateless (Network ACL) and stateful (Security Groups) firewalls. When possible, throw in a load balancer with a web application firewall (WAF) and a NAT gateway and hide your web servers safely in private subnets.
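To show what "restrict ingress always" can look like as an automated check, here is a small sketch that scans security group data for rules open to the whole internet. The input mirrors the shape returned by EC2's DescribeSecurityGroups (`IpPermissions`, `IpRanges`, `Ipv6Ranges`); the function name and the choice to tolerate only port 443 are our assumptions, so tune them to your own architecture.

```python
OPEN_CIDRS = {"0.0.0.0/0", "::/0"}


def find_open_ingress(security_groups, allowed_ports=frozenset({443})):
    """Return (group_id, protocol, from_port, to_port) for every ingress rule
    that is open to the internet on anything other than an allowed port."""
    findings = []
    for group in security_groups:
        for rule in group.get("IpPermissions", []):
            cidrs = [r["CidrIp"] for r in rule.get("IpRanges", [])]
            cidrs += [r["CidrIpv6"] for r in rule.get("Ipv6Ranges", [])]
            if not OPEN_CIDRS.intersection(cidrs):
                continue  # rule is limited to specific networks
            from_port = rule.get("FromPort")
            to_port = rule.get("ToPort")
            # Rules with protocol "-1" carry no ports and allow all traffic.
            is_single_allowed_port = (
                from_port is not None
                and from_port == to_port
                and from_port in allowed_ports
            )
            if not is_single_allowed_port:
                findings.append(
                    (group["GroupId"], rule.get("IpProtocol"), from_port, to_port)
                )
    return findings


example_groups = [
    {"GroupId": "sg-lb", "IpPermissions": [
        {"IpProtocol": "tcp", "FromPort": 443, "ToPort": 443,
         "IpRanges": [{"CidrIp": "0.0.0.0/0"}]}]},
    {"GroupId": "sg-ssh", "IpPermissions": [
        {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
         "IpRanges": [{"CidrIp": "0.0.0.0/0"}]}]},
]
print(find_open_ingress(example_groups))
```

In this example only the SSH rule is reported; the load balancer's HTTPS rule is treated as intentional.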

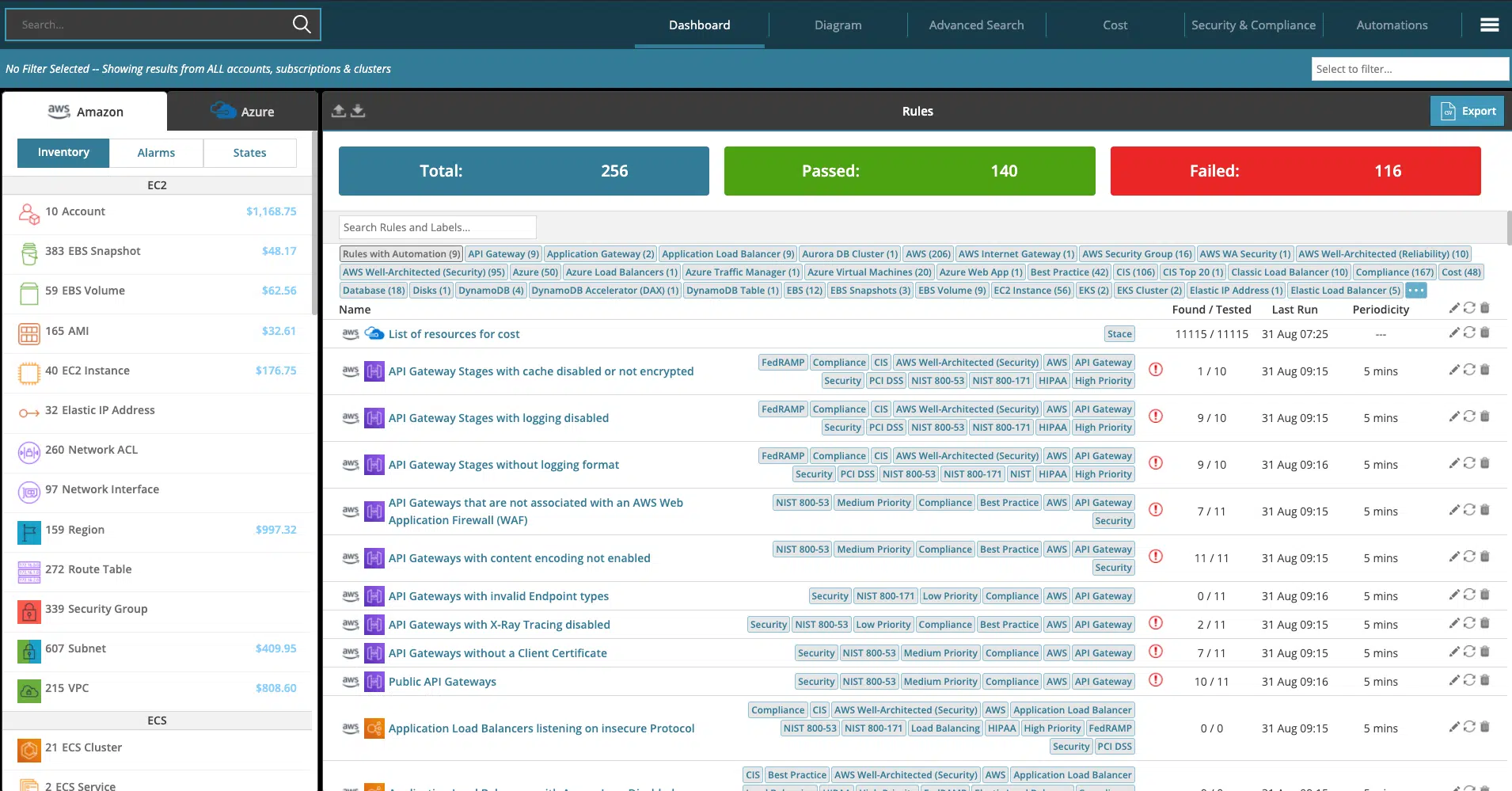

Hyperglance is particularly effective at quickly identifying unsecured resources. It has over 200 built-in rules that will identify security holes and compliance failings in real time.


2. Find Underutilized Resources & Data

Underutilized or unnecessary cloud resources can easily drive up costs.

As your organization grows, the overhead tied to underutilized resources will also grow if you’re not careful.

Let’s look at a few common scenarios.

Overprovisioned Resources

Rarely do engineers deploy instances to the exact specifications required by the apps running on them. There may be opportunities to downsize instances and clusters where CPU, memory, and storage aren’t being fully utilized.
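If you want to hunt for downsizing candidates yourself, the logic can be as simple as the sketch below. It assumes you have already exported utilization samples (percentages) per instance from your monitoring tool; the data layout, function name, and thresholds are all illustrative assumptions rather than recommended values.

```python
from statistics import mean


def find_downsize_candidates(utilization, avg_cpu_max=20.0, avg_mem_max=40.0, peak_cpu_max=50.0):
    """Return instance IDs whose average CPU and memory are low and whose CPU
    never spikes, which suggests a smaller instance size may be enough."""
    candidates = []
    for instance_id, samples in utilization.items():
        avg_cpu = mean(samples["cpu"])
        avg_mem = mean(samples["memory"])
        peak_cpu = max(samples["cpu"])
        # Require a low peak too, so bursty workloads are not downsized.
        if avg_cpu < avg_cpu_max and avg_mem < avg_mem_max and peak_cpu < peak_cpu_max:
            candidates.append(instance_id)
    return sorted(candidates)


example = {
    "i-app": {"cpu": [5, 8, 6, 7], "memory": [20, 25, 22, 21]},
    "i-db": {"cpu": [55, 70, 65, 60], "memory": [70, 75, 72, 71]},
    "i-batch": {"cpu": [2, 3, 60, 2], "memory": [10, 10, 10, 10]},
}
print(find_downsize_candidates(example))
```

Here only `i-app` is flagged: `i-db` is busy, and `i-batch` is idle on average but spikes to 60% CPU, so it is left alone.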

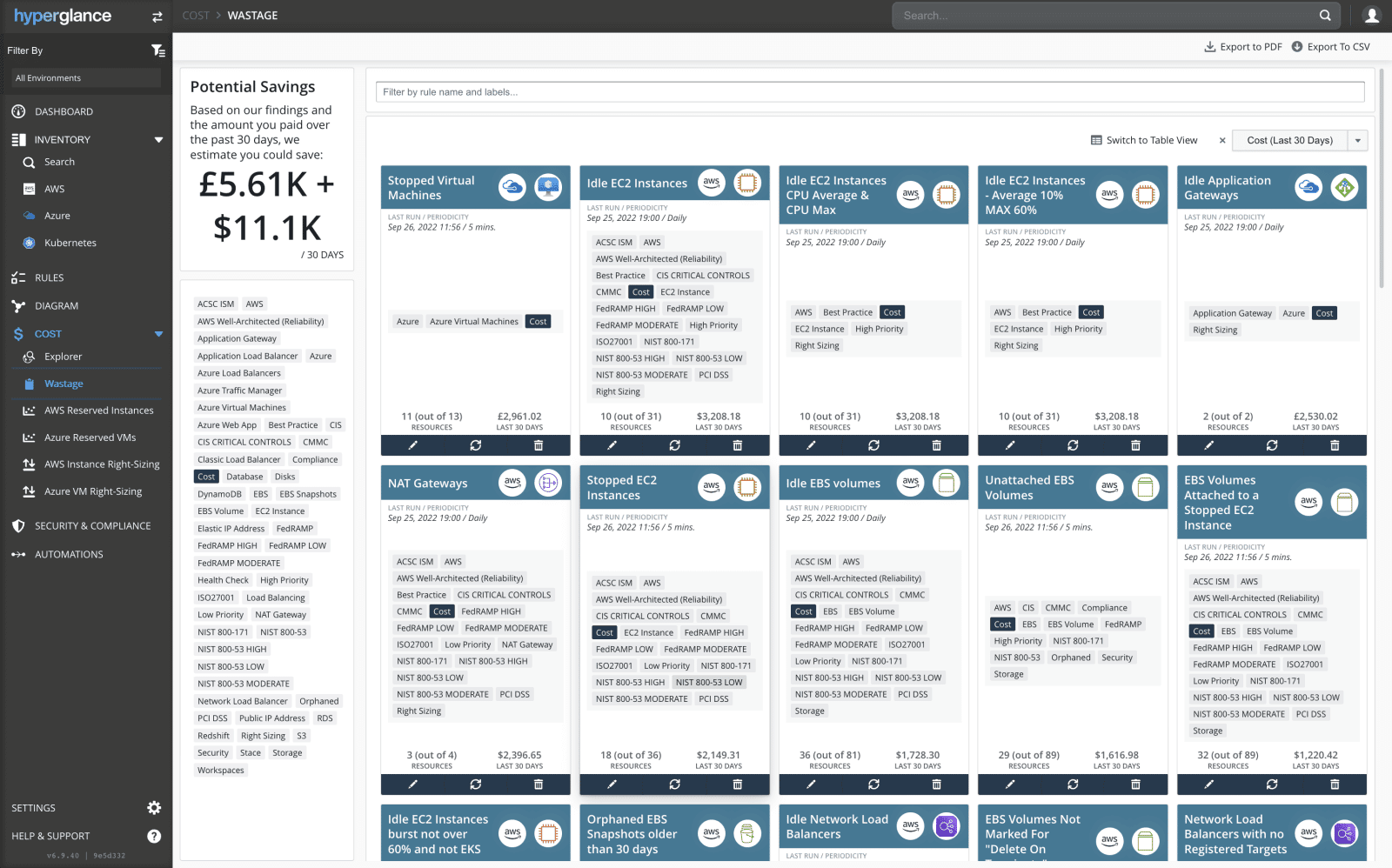

Hyperglance’s cost dashboard conveniently identifies these resources for you, as shown above.

Sandbox & test instances left running

Every cloud needs a sandbox environment, usually a separate account, which will help you distinguish what’s “live” or in production, and what is still being deployed & configured.

Busy engineers may forget to spin a sandbox resource down, leaving potentially costly assets running in limbo.

Best practice is to build automation to ensure your sandbox resources aren’t accumulating.
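As a starting point for that kind of automation, here is a minimal sketch of the selection step: deciding which sandbox instances have been running too long. It works on plain dictionaries so it stays independent of any one CSP; the `environment` and `keep` tag names, the field names, and the 72-hour limit are all assumptions you would replace with your own conventions.

```python
from datetime import datetime, timedelta, timezone


def select_stale_sandbox_instances(instances, now, max_age=timedelta(hours=72)):
    """Return IDs of running sandbox instances older than max_age,
    skipping anything explicitly tagged keep=true."""
    stale = []
    for inst in instances:
        tags = inst.get("tags", {})
        if tags.get("environment") != "sandbox":
            continue  # never touch production or untagged resources
        if tags.get("keep") == "true":
            continue  # owner has opted out of cleanup
        if inst["state"] == "running" and now - inst["launched_at"] > max_age:
            stale.append(inst["id"])
    return stale


now = datetime(2024, 6, 10, 12, 0, tzinfo=timezone.utc)
example = [
    {"id": "i-old", "state": "running", "tags": {"environment": "sandbox"},
     "launched_at": now - timedelta(days=10)},
    {"id": "i-prod", "state": "running", "tags": {"environment": "production"},
     "launched_at": now - timedelta(days=90)},
]
print(select_stale_sandbox_instances(example, now))
```

A scheduled job could feed the returned IDs into a stop or terminate call. Stopping first and terminating later is the gentler option while you build trust in the rule.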

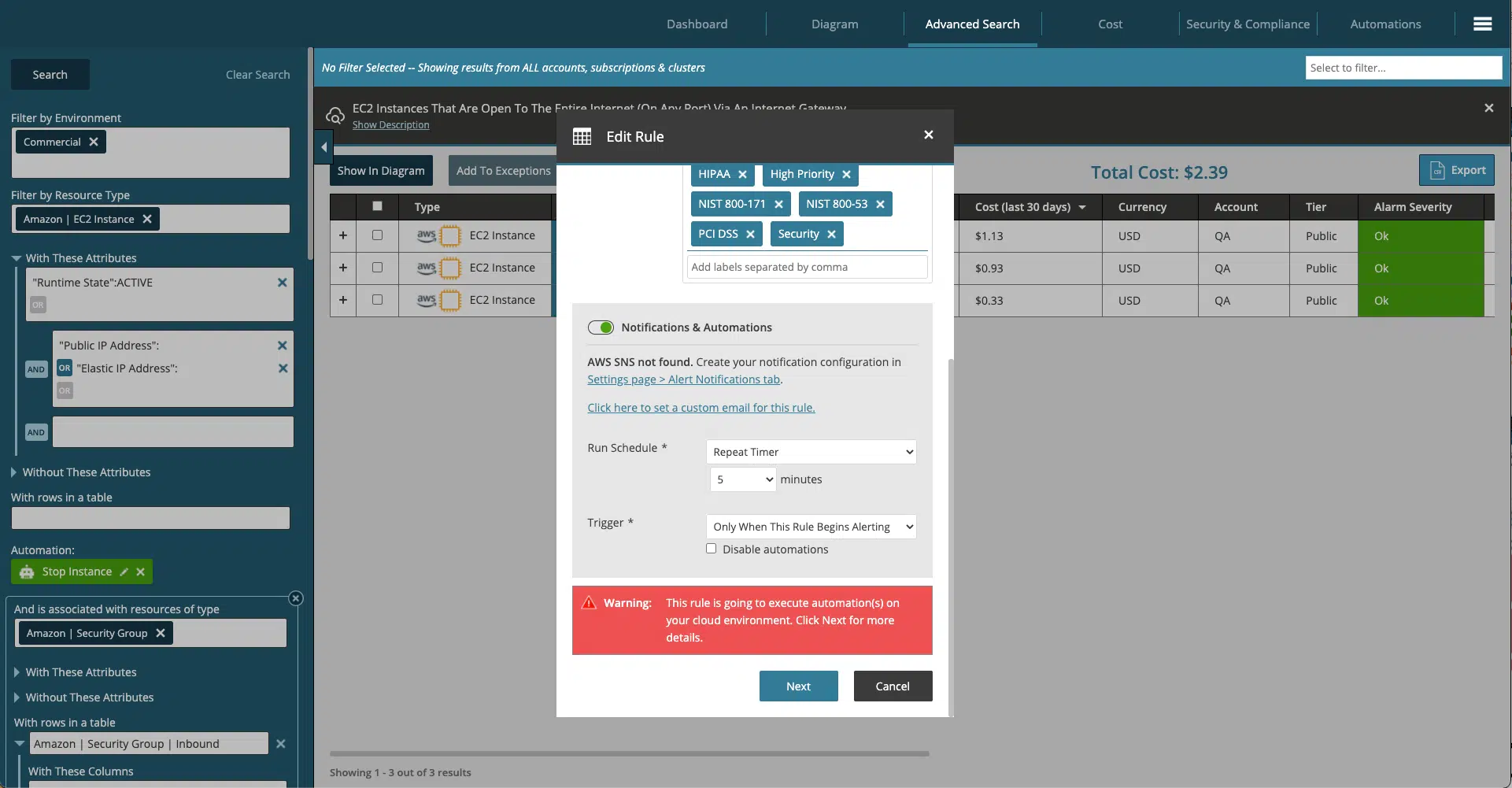

Hyperglance’s rules engine, paired with its built-in automation, enables you to filter resources by environment and create a cleanup job in minutes!

Storing data without lifecycle policies

The cost of storing cloud data varies depending on use case and accessibility. Data costs can skyrocket when tied to other systems like a security information and event management (SIEM) tool, usually in the form of Elasticsearch or Splunk.

Create a data retention policy. Your organization’s cybersecurity team or regulatory compliance guidance should tell your engineers what types of data need to be retained (snapshots, backups, logs) and for how long.
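Once a retention policy exists, it helps to express it as data so that cleanup jobs can apply it consistently. The sketch below is one way to do that; the retention periods are placeholders (your compliance team sets the real numbers), and the item fields and function name are assumptions for illustration.

```python
from datetime import date, timedelta

# Placeholder retention periods in days; real values come from your policy.
RETENTION_DAYS = {"snapshot": 30, "backup": 365, "log": 90}


def expired_items(items, today, retention=RETENTION_DAYS):
    """Return the names of items that are older than their retention period.
    Items of an unknown kind are skipped so a human can review them."""
    expired = []
    for item in items:
        days = retention.get(item["kind"])
        if days is None:
            continue  # no rule defined: do not delete by default
        if today - item["created"] > timedelta(days=days):
            expired.append(item["name"])
    return expired


example = [
    {"name": "snap-old", "kind": "snapshot", "created": date(2024, 4, 1)},
    {"name": "backup-dec", "kind": "backup", "created": date(2023, 12, 1)},
    {"name": "log-jan", "kind": "log", "created": date(2024, 1, 15)},
]
print(expired_items(example, today=date(2024, 6, 1)))
```

In this example the snapshot and the log file are past their retention period, while the backup is kept because it is under a year old.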

3. Don't Overuse On-Demand Pricing

On-demand pricing is one of those things most cloud professionals know is costly but are hesitant to move away from, often because of unpredictable environment changes or growth.

There are a few ways to tackle this issue without leaving money on the table.

Understand the cost differentials

Research the different options available with your CSP. AWS, for example, offers 1-year and 3-year reservations with no upfront, partial upfront, and all upfront payment options, in addition to spot instances.

AWS’ reserved instances can save you up to 72% compared to on-demand pricing, and spot instances can save up to 90%!

Conduct quarterly cloud workload reviews

Once every three months, take some time with the engineering team to go through your cloud workloads, focusing on the high-cost resources first.

While reviewing, ask “do we need this?” and, if so, “will this requirement still exist in 1-3 years?” If the answer is yes, note the instance type and expected duration.

Add on-demand pricing vs reservation pricing and at the end of this exercise, you’ll have a list to present to your CFO that strongly justifies upfront pricing.
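The review described above boils down to a small table, which you can generate rather than build by hand. This sketch assumes you have recorded each workload's answers and its on-demand hourly rate; the field names, the example rates, and the 30% discount are hypothetical inputs, so plug in the actual rates quoted by your CSP.

```python
HOURS_PER_YEAR = 8760


def reservation_candidates(workloads, discount):
    """Keep workloads that pass both review questions and compare their
    annual on-demand cost with the discounted reservation cost."""
    rows = []
    for w in workloads:
        if not (w["still_needed"] and w["needed_long_term"]):
            continue  # fails "do we need this?" or "will we still need it?"
        on_demand = w["hourly_on_demand"] * w["count"] * HOURS_PER_YEAR
        reserved = on_demand * (1 - discount)
        rows.append({
            "name": w["name"],
            "instance_type": w["instance_type"],
            "on_demand_annual": round(on_demand, 2),
            "reserved_annual": round(reserved, 2),
            "annual_savings": round(on_demand - reserved, 2),
        })
    # Biggest savings first, matching the "high-cost resources first" advice.
    return sorted(rows, key=lambda r: r["annual_savings"], reverse=True)


example = [
    {"name": "api", "instance_type": "example.large", "count": 4,
     "hourly_on_demand": 0.10, "still_needed": True, "needed_long_term": True},
    {"name": "poc", "instance_type": "example.small", "count": 1,
     "hourly_on_demand": 0.05, "still_needed": True, "needed_long_term": False},
]
for row in reservation_candidates(example, discount=0.30):
    print(row)
```

Sorting by savings puts the strongest arguments at the top of the list you hand to your CFO.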

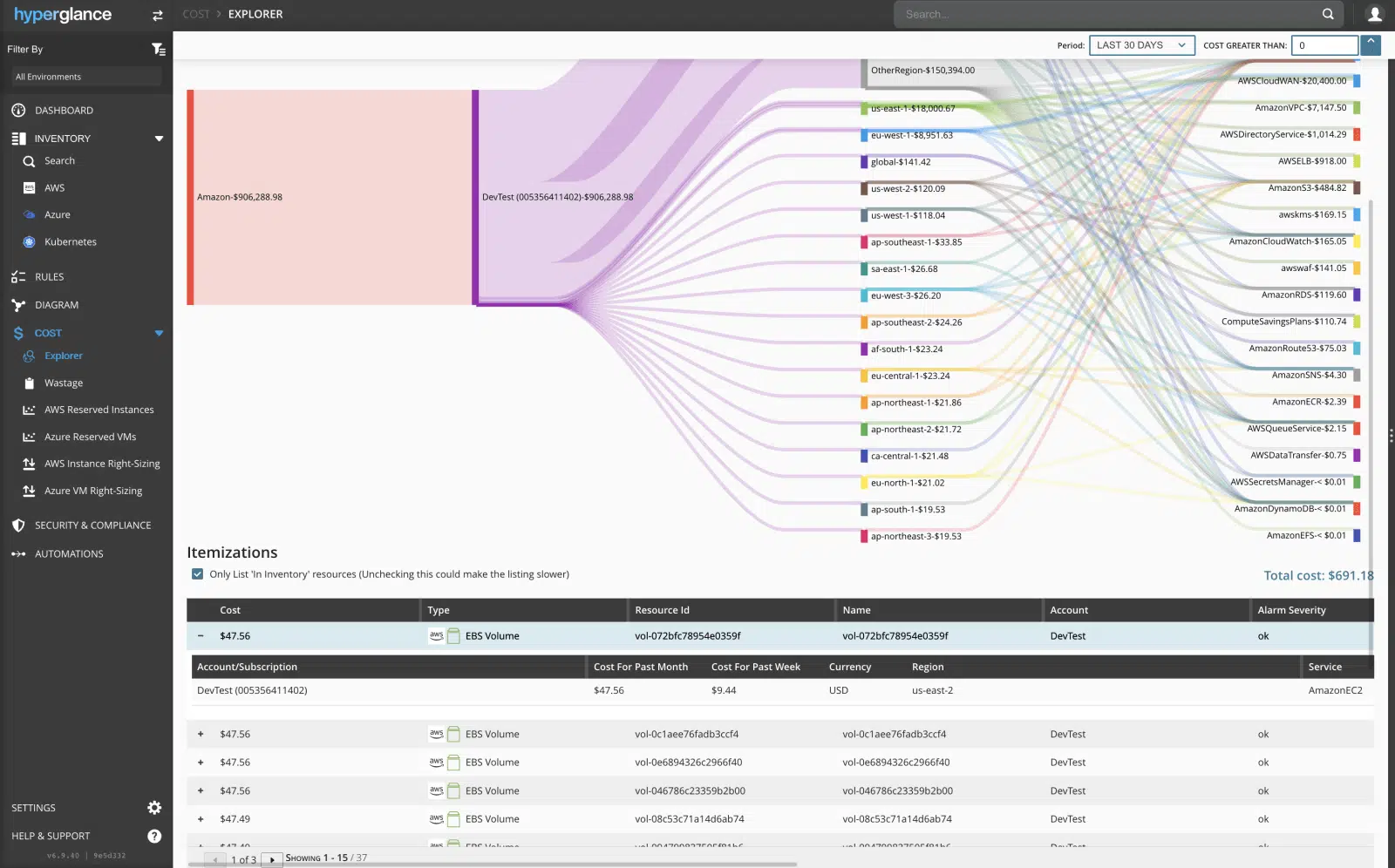

Hyperglance’s interactive cloud diagram, inventory list, and cost explorer are particularly useful for this exercise, enabling you to see costs for different environments right away and drill down as needed.

Consider working with a reseller

If your team is small or overburdened, it may make sense to pass off the management of instance reservations to a reseller.

Resellers purchase instance reservations on your behalf and then apply them across multiple customers. The more customers they have, the less risk is involved.

Typically you’ll see pass-through savings of 4-15% with the added benefit of not having to manage your own instance reservations.

Find The Right Balance

Perhaps you’re working with Kubernetes clusters or EC2 auto-scaling groups as well as EC2 instances.

It’s important to find the right balance of on-demand, reservation, and spot options for each environment.

For example, say you’re tasked with saving money on a cluster that has three t3.xlarge master nodes ($0.1664 per hour on-demand) and a minimum of three t3.xlarge worker nodes at all times, but that can scale up to 10 worker nodes as needed. The cluster is also expected to still be in use over the next 12 months.

The most cost-effective method would be to purchase 1-year reservations for the three master and three worker nodes, saving 41% on each, and then configure the cluster to prioritize spot instances ($0.0499 per hour) when scaling, with on-demand as a backup if spot instances aren’t available.
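To put numbers on this example, here is a short worked calculation using only the prices quoted above. It assumes the six baseline nodes run all year (8,760 hours) and that the 41% saving applies to the hourly rate; the function names and the `spot_fraction` parameter are ours, added for illustration.

```python
HOURS_PER_YEAR = 8760
ON_DEMAND = 0.1664         # t3.xlarge on-demand, USD per hour (from the text)
SPOT = 0.0499              # t3.xlarge spot, USD per hour (from the text)
RESERVED_DISCOUNT = 0.41   # 1-year reservation saving (from the text)
BASELINE_NODES = 6         # three master + three worker nodes, always on


def baseline_annual_cost(reserved):
    """Annual cost of the six always-on nodes, with or without reservations."""
    rate = ON_DEMAND * (1 - RESERVED_DISCOUNT) if reserved else ON_DEMAND
    return BASELINE_NODES * rate * HOURS_PER_YEAR


def burst_cost(node_hours, spot_fraction):
    """Cost of scale-out capacity when a fraction of it is served by spot
    instances and the remainder falls back to on-demand."""
    spot_hours = node_hours * spot_fraction
    return spot_hours * SPOT + (node_hours - spot_hours) * ON_DEMAND


on_demand_total = baseline_annual_cost(reserved=False)
reserved_total = baseline_annual_cost(reserved=True)
print(f"Baseline on-demand: ${on_demand_total:,.2f}")
print(f"Baseline reserved:  ${reserved_total:,.2f}")
print(f"Annual saving:      ${on_demand_total - reserved_total:,.2f}")
print(f"Spot vs on-demand:  {1 - SPOT / ON_DEMAND:.0%} cheaper per hour")
```

Under these assumptions the baseline drops from roughly $8,746 to roughly $5,160 a year, a saving of about $3,586, and every scale-out hour served by spot costs about 70% less than on-demand.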

The Best Cloud Management Platforms

When you're looking for the best Cloud Management Platform (CMP) for the job, there are some key aspects that are often found in the top tier of solutions.

- Comprehensive Cloud Support: The best platforms are designed to support multiple cloud providers such as Amazon Web Services (AWS) and Microsoft Azure, including your K8s setup. They provide a unified interface and management capabilities for different cloud environments, allowing users to manage and monitor their resources seamlessly.

- Centralized Management: These platforms offer centralized management and control over cloud resources, enabling users to provision, configure, and monitor their cloud infrastructure from a single interface. They provide a holistic view of all resources, including virtual machines, storage, networking, and applications.

- Automation & Orchestration: Automation is a crucial component of cloud management platforms. They provide tools for automating tasks, such as resource provisioning, scaling, and application deployment. Additionally, they support orchestration to coordinate complex workflows involving multiple cloud services.

- Cost Optimization: The best cloud management platforms offer cost optimization features to help users optimize their cloud spending. They provide insights into resource usage, cost allocation, and recommendations for rightsizing resources, identifying unused or underutilized resources, and implementing cost-saving measures.

- Security & Compliance: These platforms prioritize cloud security and compliance by providing robust features to manage access control, encryption, data protection, and compliance auditing. They integrate with existing security tools and frameworks, allowing users to enforce security policies across their cloud infrastructure.

- Monitoring and Analytics: They offer monitoring and analytics capabilities to provide real-time visibility into cloud performance, resource utilization, and application health. These platforms generate alerts, metrics, and logs to facilitate troubleshooting, performance optimization, and capacity planning.

- Scalability & Flexibility: Cloud management platforms are designed to handle large-scale deployments and support the growth of cloud infrastructure. They should provide scalability, allowing users to manage resources efficiently as their needs evolve. They should also offer flexibility in terms of customization and extensibility to adapt to diverse requirements.

- User-Friendly Interface: Cloud infrastructure can be overwhelming in size, which is partly why managing it is challenging. The best platforms prioritize user experience and provide an intuitive and user-friendly interface. They offer dashboards, visualizations, and self-service portals to simplify the management of cloud resources, making it easier for users to navigate and interact with the platform.

- API & Integration Capabilities: Cloud management platforms should provide robust APIs and integration capabilities to facilitate integration with existing tools and systems. This allows users to automate workflows, extract data, and integrate the platform with other management and monitoring tools they use.

Hyperglance - Cloud Management You Control

Hyperglance gives you complete cloud management enabling you to have confidence in your security posture and cost management whilst providing you with enlightening, real-time architecture diagrams.

Monitor security & compliance, manage costs & reduce your bill, explore interactive diagrams & inventory, and put built-in automation to work. Save time & money and get complete peace of mind.

Book a 30-minute demo today, or experience it all, for free, with a 14-day trial.

About The Author: Stephen Lucas

As Hyperglance's Chief Product Officer, Stephen is responsible for the Hyperglance product roadmap. Stephen has over 20 years of experience in product management, project management, and cloud strategy across various industries.